Fixing Google Search Console’s Coverage Report ‘Excluded Pages’

Google Search Console lets you look at your website through the eyes of Google.

You get information about your website’s performance and details about page experience, security issues, crawling, or indexing.

The Excluded section of the Index Coverage report in Google Search Console provides information about the indexing status of your website’s pages.

Find out why some of your website’s pages end up in the Excluded report in Google Search Console, and how to fix it.

What is the Index Coverage Report?

The Google Search Console Coverage report displays detailed information about the index status of your website’s pages.

Your web pages can fall into one of the following four groups:

- Error: Pages that Google cannot index. You should review this report because Google thinks you may want to index these pages.

- Valid with warnings: Pages indexed by Google, but with some issues that you should look at.

- Valid: Pages indexed by Google.

- Excluded: Pages excluded from the index.

What are excluded pages?

Google does not index pages in the Error and Excluded buckets.

The main difference between them is:

- Google thinks that pages in Error should be indexed, but it cannot index them because of an error that you should review. For example, non-indexable pages submitted through an XML sitemap fall under Error.

- Google thinks that pages in the Excluded bucket should indeed be excluded, and that this is your intention. For example, non-indexable pages that were not submitted to Google appear in the Excluded report.

Screenshot from Google Search Console, May 2022

However, Google doesn’t always get it right, and sometimes pages that should be indexed end up in Excluded.

Fortunately, Google Search Console gives the reason why a page was placed in a particular bucket.

This is why it is a good practice to carefully review pages in all four groups.

Now let’s dive into the Excluded bucket.

Possible reasons for excluded pages

There are 15 possible reasons why your web pages are in the excluded group. Let’s take a closer look at each one.

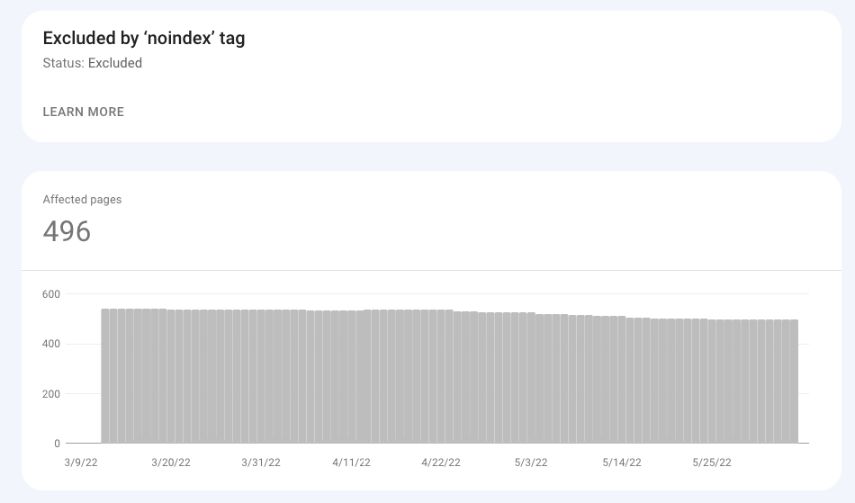

Excluded by “noindex” tag

These are the URLs that contain the “noindex” tag.

Google thinks you really want to exclude these pages from indexing because you did not list them in your XML sitemap.

These could be, for example, login pages, user pages or search results pages.

Recommended actions:

- Review these URLs to make sure you want to exclude them from Google’s index.

- Check whether the “noindex” tag is still present on those URLs.
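The second check can be scripted. Below is a minimal Python sketch, not an official Google tool. It assumes you already have a page’s HTML and response headers (fetching is left out), and the name `has_noindex` is made up for illustration. It looks for a noindex directive in the robots meta tag or in the X-Robots-Tag header.

```python
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {k.lower(): (v or "") for k, v in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.extend(
                d.strip().lower() for d in attr.get("content", "").split(",")
            )


def has_noindex(html, headers=None):
    """Return True if the HTML or the response headers carry a noindex directive.

    Simplification: X-Robots-Tag values prefixed with a user agent
    (for example "googlebot: noindex") are not handled here.
    """
    parser = _RobotsMetaParser()
    parser.feed(html)
    header_value = ""
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            header_value = value.lower()
    header_directives = [d.strip() for d in header_value.split(",")]
    return "noindex" in parser.directives or "noindex" in header_directives
```

Running this over the sample URLs from the report gives a quick list of pages where the tag is present, which you can then compare against the pages you actually want excluded.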

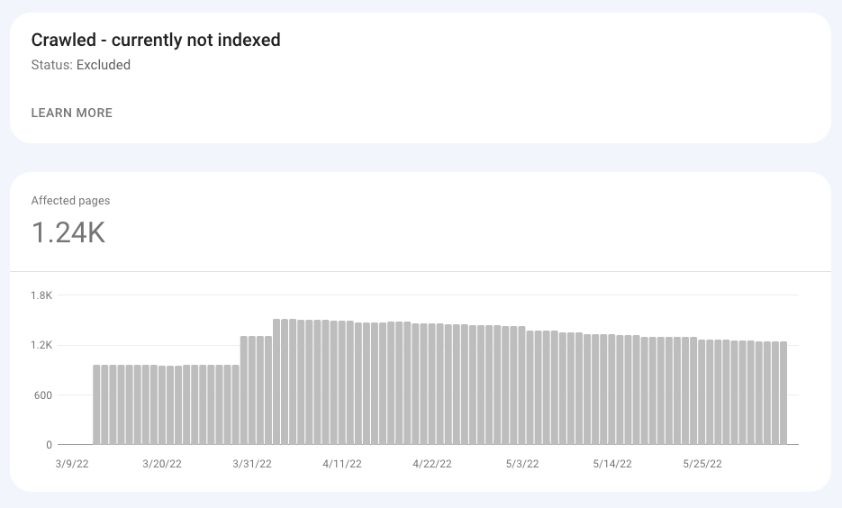

Crawled – not currently indexed

Google has crawled these pages but has not yet indexed them.

As Google says in its documentation, a URL in this bucket “may or may not be indexed in the future; there is no need to resubmit this URL for crawling.”

Many SEO professionals have noted that a site may have serious quality issues if many normal, indexable pages are listed under Crawled – not currently indexed.

This may mean that Google has crawled these pages and does not believe that they provide enough value for indexing.

Screenshot from Google Search Console, May 2022

Recommended action:

- Review your website in terms of quality and E-A-T (expertise, authoritativeness, trustworthiness).

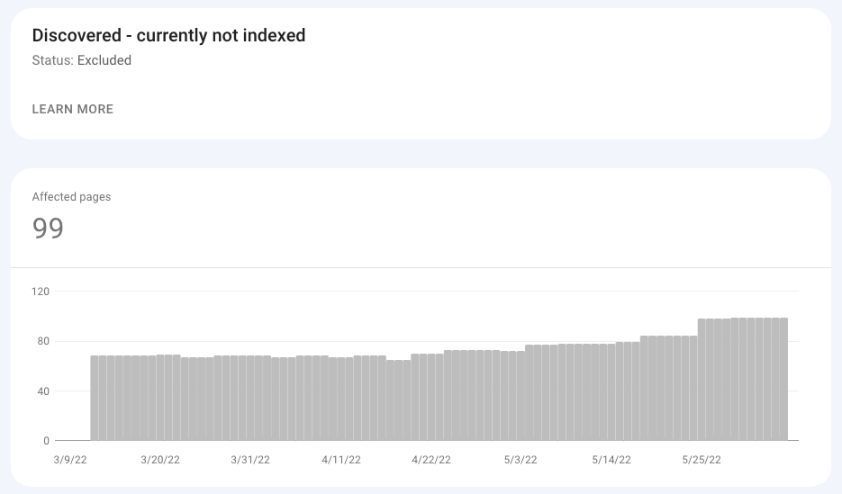

Discovered – not currently indexed

As the Google documentation says, a page under Discovered – not currently indexed “was found by Google, but not yet crawled.”

Google did not crawl the page so as not to overload the server. A large number of pages in this group may mean that your site has crawl budget issues.

Screenshot from Google Search Console, May 2022

Recommended action:

- Check your server’s health.

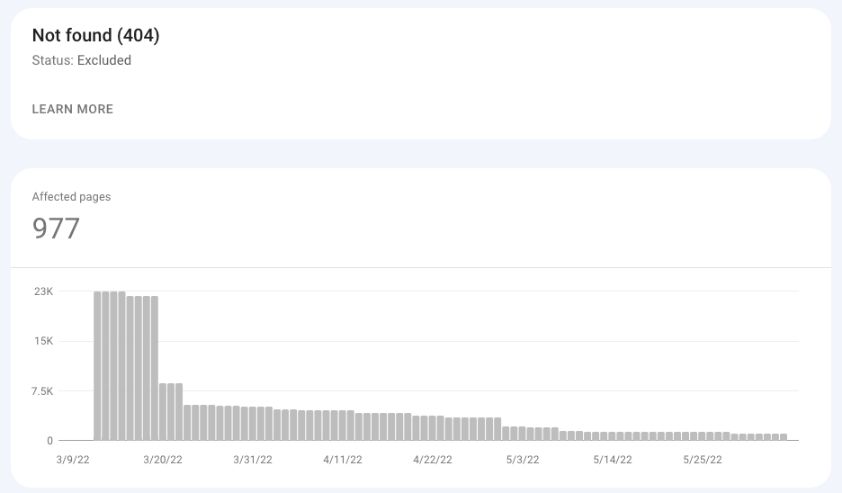

Not found (404)

These are the pages that returned a 404 (Not Found) status code when Google requested them.

These are not URLs submitted to Google (e.g., in an XML sitemap). Instead, Google discovered them on its own (e.g., through another website that links to an old page deleted a long time ago).

Screenshot from Google Search Console, May 2022

Recommended action:

- Review these pages and decide whether to implement a 301 redirect to a working page.

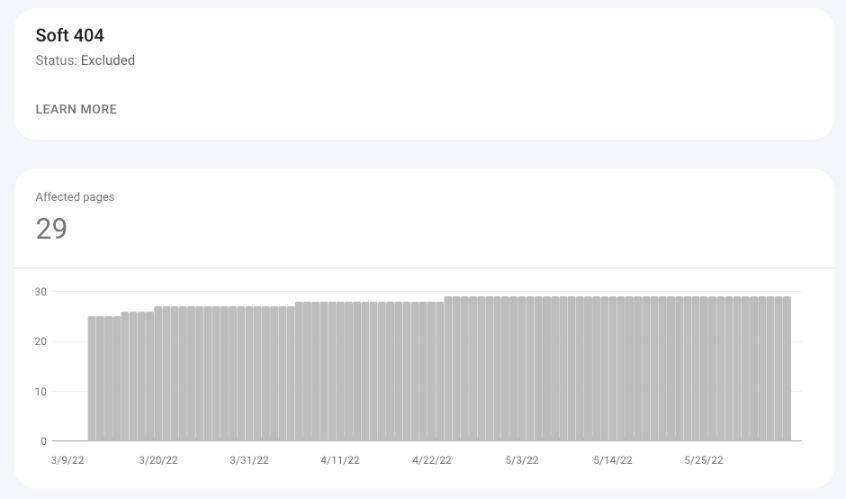

Soft 404

A soft 404 is, in most cases, an error page that returns an OK (200) status code.

Alternatively, it can be a thin page with little or no content that uses words like “sorry”, “error”, “not found”, etc.

Screenshot from Google Search Console, May 2022

Recommended actions:

- For error pages, make sure they return a 404 status code.

- For thin content pages, add unique content to help Google recognize the URL as a standalone page.
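To triage a long list of candidates, a rough heuristic can help. The sketch below is an assumption-laden illustration, not how Google classifies soft 404s: the phrase list comes from the examples above, the 50-word threshold is an arbitrary choice, and `looks_like_soft_404` is a made-up name. It flags pages that return 200 but are empty, or are thin and contain error wording.

```python
import re

# Phrases taken from the examples in the text; extend for your own site.
ERROR_PHRASES = ("sorry", "error", "not found")
# Assumed cut-off for a "thin" page; tune it to your templates.
MIN_WORDS = 50


def visible_text(html):
    """Crudely strip scripts, styles, and tags to approximate visible text."""
    without_scripts = re.sub(r"(?is)<(script|style)\b.*?</\1>", " ", html)
    return re.sub(r"(?s)<[^>]+>", " ", without_scripts)


def looks_like_soft_404(status_code, html):
    """Return True when a 200 response looks like an error or empty page."""
    if status_code != 200:
        return False  # a real 404/410 is not a *soft* 404
    words = visible_text(html).lower().split()
    if not words:
        return True  # empty body served with 200
    text = " ".join(words)
    is_thin = len(words) < MIN_WORDS
    return is_thin and any(phrase in text for phrase in ERROR_PHRASES)
```

Pages flagged this way still need a manual look: error templates should be switched to a real 404 status, while genuinely thin pages need more content.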

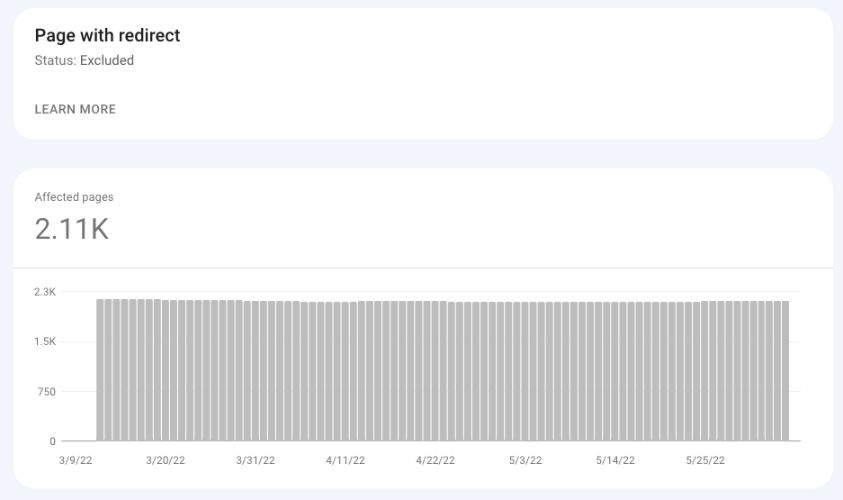

Page with redirect

All redirected pages on your website go to the Excluded bucket, where you can see every redirected page that Google has detected on your website.

Screenshot from Google Search Console, May 2022

Recommended actions:

- Review the redirected pages to make sure the redirects were implemented intentionally.

- Some WordPress plugins automatically create redirects when you change a URL, so you may want to review these from time to time.
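When reviewing redirects, chains and loops are the usual surprises, especially when a plugin adds rules automatically. The following Python sketch works on a hypothetical `redirect_map` (a dict from source URL to target URL that you would export from your own crawl or plugin settings); the function name and status labels are illustrative assumptions.

```python
def follow_redirects(start_url, redirect_map, max_hops=10):
    """Follow redirects in redirect_map and classify the result.

    Returns (chain, status) where status is one of:
      "ok"       - zero or one hop
      "chain"    - more than one hop before reaching the final URL
      "loop"     - the redirects lead back to an already visited URL
      "too_long" - gave up after max_hops hops
    """
    chain = [start_url]
    seen = {start_url}
    current = start_url
    while current in redirect_map:
        current = redirect_map[current]
        chain.append(current)
        if current in seen:
            return chain, "loop"
        seen.add(current)
        if len(chain) - 1 >= max_hops:
            return chain, "too_long"
    status = "ok" if len(chain) <= 2 else "chain"
    return chain, status


# Example with made-up paths:
redirects = {"/old": "/new", "/a": "/b", "/b": "/c", "/x": "/y", "/y": "/x"}
for url in ("/old", "/a", "/x"):
    print(url, follow_redirects(url, redirects))
```

Anything reported as a chain or loop is a good candidate for pointing the first URL straight at its final destination.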

Duplicate without user-selected canonical

Google believes that these URLs are duplicates of other URLs on your website and therefore should not be indexed.

You did not set a canonical tag for these URLs, so Google selected the canonical based on other signals.

Recommended action:

- Inspect these URLs to check which canonical URLs Google has selected for these pages.
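Before inspecting what Google selected, it helps to know what each page declares itself. This is a small Python sketch under stated assumptions: you supply the page URL and its HTML yourself, and `declared_canonical` and `canonical_status` are hypothetical helper names. It only reads the `rel="canonical"` link; it cannot tell you which URL Google actually chose.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class _CanonicalParser(HTMLParser):
    """Collects href values of <link rel="canonical"> tags."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = {k: (v or "") for k, v in attrs}
        rel_values = attr.get("rel", "").lower().split()
        if "canonical" in rel_values and attr.get("href"):
            self.canonicals.append(attr["href"].strip())


def declared_canonical(html):
    """Return the first declared canonical href, or None if there is none."""
    parser = _CanonicalParser()
    parser.feed(html)
    return parser.canonicals[0] if parser.canonicals else None


def canonical_status(page_url, html):
    """Classify a page as "missing", "self", or "points_elsewhere"."""
    canonical = declared_canonical(html)
    if canonical is None:
        return "missing"
    resolved = urljoin(page_url, canonical)  # resolve relative hrefs
    if resolved.rstrip("/") == page_url.rstrip("/"):
        return "self"
    return "points_elsewhere"
```

Pages reported as "missing" are the ones where Google has to pick a canonical without your input.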

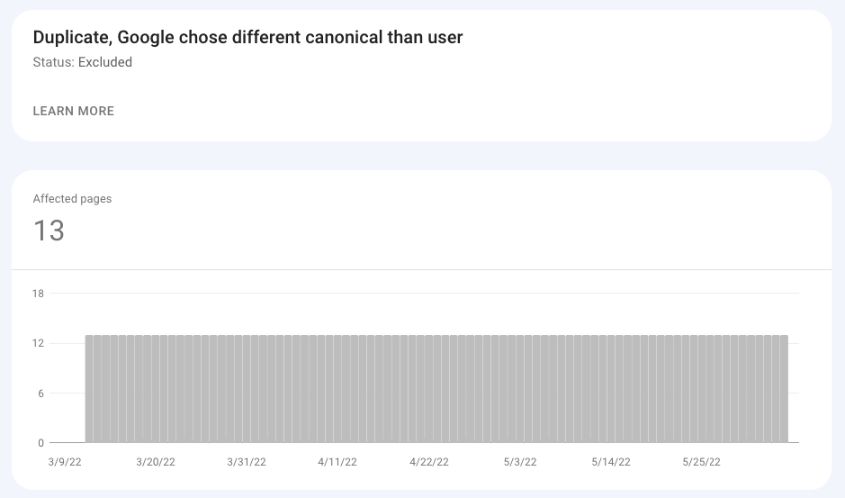

Duplicate, Google chose different canonical than user

Screenshot from Google Search Console, May 2022

In this case, you declared a canonical URL for the page, but Google nevertheless selected a different URL as the canonical. As a result, the Google-selected canonical is indexed, and the user-selected one is not.

Recommended actions:

- Inspect the URL to check which canonical Google selected.

- Analyze the possible signals that made Google choose a different canonical (e.g., external links).

Duplicate, submitted URL not selected as canonical

The difference between the previous status and this one is that here you submitted a URL to Google for indexing without declaring its canonical, and Google thinks that a different URL would make a better canonical.

As a result, the Google-selected canonical is indexed instead of the submitted URL.

Recommended action:

- Inspect the URL to check which canonical Google selected.

Alternate page with proper canonical tag

These are simply duplicates of pages that Google recognizes as canonical URLs.

These pages have canonical tags that point to the correct canonical URL.

Recommended action:

- In most cases, no action is required.

Blocked by robots.txt

These are the pages blocked by robots.txt.

When analyzing this bucket, keep in mind that Google can still index these pages (and display them in a limited form) if it finds a reference to them, for example, on other websites.

Recommended actions:

- Verify that these pages are blocked using the robots.txt Tester tool.

- If you want to remove them from the index, add a “noindex” tag and remove the block from the robots.txt file, so that Google can crawl the pages and see the tag.
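For a quick local check alongside Google’s own tester, Python’s standard library ships a robots.txt parser. The sketch below assumes you paste in your robots.txt content and a list of URLs; `blocked_urls` is a made-up helper name. Note that `urllib.robotparser` follows the original robots.txt conventions and may not match Googlebot’s behavior on wildcard rules, so treat the result as a first pass only.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt content disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Example with a made-up robots.txt and made-up URLs:
robots_txt = """\
User-agent: *
Disallow: /private/
"""
urls = [
    "https://example.com/private/report",
    "https://example.com/blog/post",
]
print(blocked_urls(robots_txt, urls))
```

Any URL in the output that you want deindexed needs the block lifted first; otherwise Google never gets to read the “noindex” tag.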

Blocked by page removal tool

This report lists the pages whose removal was requested through the Removals tool.

Keep in mind that this tool removes pages from search results only temporarily (90 days) and does not remove them from the index.

Recommended action:

- Verify whether the pages submitted via the Removals tool should be removed only temporarily or should have a “noindex” tag.

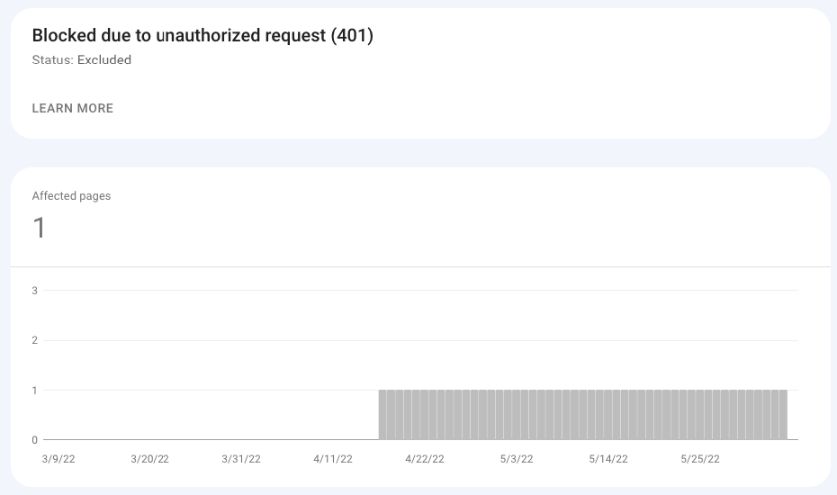

Blocked due to unauthorized request (401)

In the case of these URLs, Googlebot could not access the pages due to an authorization request (status code 401).

Unless those pages should be available without authorization, you don’t need to do anything.

Google is simply informing you about what it encountered.

Screenshot from Google Search Console, May 2022

Recommended action:

- Verify whether these pages should actually require authorization.

Blocked due to access forbidden (403)

This status code is usually the result of some server error.

A 403 is returned when the credentials provided are incorrect, and access to the page cannot be granted.

As Google’s documentation states:

“Googlebot never provides the credentials, so the server incorrectly returns this error. You must either fix this error, or block the page by robots.txt or noindex.”

What can you learn from the excluded pages?

Large and sudden spikes in a particular group of excluded pages may indicate serious problems with the site.

Here are three examples of spikes that may indicate serious problems with your website:

- A large spike in Not found (404) pages may indicate an unsuccessful migration where the URLs have changed but redirects to the new addresses were not implemented. This may also happen after, for example, an inexperienced person changes the slug of blog posts and, as a result, changes the URLs of all blog posts.

- A large spike in Discovered – not currently indexed or Crawled – not currently indexed may indicate that your site has been hacked. Be sure to review the example pages to check whether these are really your pages or were created as a result of a hack (e.g., pages with Chinese characters).

- A large spike in Excluded by “noindex” tag may also indicate an unsuccessful launch or migration. This often happens when a new site goes to production with the “noindex” tags from the staging site.
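If you record the page counts per exclusion reason over time (for example, copied from the report chart), spotting such spikes can be automated. The sketch below is one simple way to do it, with assumed thresholds: a count is flagged when it at least doubles and grows by at least 100 pages compared with the previous data point. Both values and the name `find_spikes` are illustrative, not anything Search Console defines.

```python
def find_spikes(counts, ratio=2.0, min_increase=100):
    """Return the indexes where a count jumps sharply versus the previous one.

    counts: page counts for one exclusion reason, in chronological order.
    ratio: how many times larger than the previous value counts as a spike.
    min_increase: minimum absolute growth, to ignore noise on small numbers.
    """
    spikes = []
    for i in range(1, len(counts)):
        previous, current = counts[i - 1], counts[i]
        grew_enough = current - previous >= min_increase
        jumped = current >= ratio * max(previous, 1)
        if grew_enough and jumped:
            spikes.append(i)
    return spikes


# Made-up weekly counts of "Not found (404)" pages:
weekly_404 = [120, 125, 130, 900, 910]
print(find_spikes(weekly_404))
```

A flagged data point is only a prompt to open the report and look at the example URLs for that period.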

Conclusion

You can learn a lot about your website and how Googlebot interacts with it, thanks to the Excluded section of the GSC Coverage report.

Whether you are new to SEO or already have a few years of experience, make it a daily habit to check Google Search Console.

This can help you catch many technical SEO issues before they turn into real disasters.

Featured image: Milan1983/Shutterstock